Biography

“One should always be a little improbable.”

– Oscar Wilde

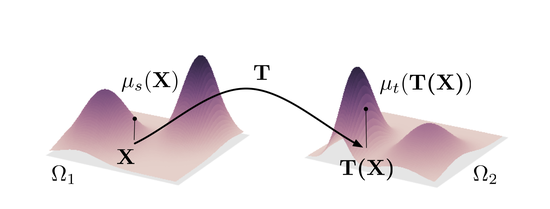

Keywords: Optimal transport, statistical learning, kernel methods, manifold and geometric approaches, optimal control

Recurrent collaborators

- Rémi Flamary (CMAP, École Polytechnique)

- Devis Tuia (EPFL)

- Alain Rakotomamonjy (Criteo AI Lab)

- Ievgen Redko (University Jean-Monnet)

- All the folks from the Obelix team

- Many more!

Software

- POT: The Python Optimal Transport Toolbox

Ongoing PhD students

- Clément Bonet (PhD 2020–2023) with François Septier and Lucas Drumetz

- Quang Huy Tran (PhD 2021–2024) with Rémi Flamary and Karim Lounici (CMAP - X)

- Rémi Cornillet (PhD 2021–2024) with Jérémy Cohen (CREATIS - Lyon)

- Renan Bernard (PhD 2021–2024) with Chloé Friguet and Valérie Garès (INSA - Rennes)

- Paul Berg (PhD 2021–2024) with Minh-Tan Pham

- Bjoern Michele (CIFRE PhD 2022–2025) with Valeo.AI

Recently defended PhD theses

- Titouan Vayer (PhD 2020) with Laetitia Chapel and Romain Tavenard; now at INRIA

- Kilian Fatras (PhD 2021) with Rémi Flamary; now a post-doc at MLIA

Teaching

I mostly teach computer and data science at Université Bretagne Sud. Among others, I give the following courses:

I also teach in the Erasmus Mundus Copernicus Master in Digital Earth (CDE), in the GeoData Science track.

Positions

We offer positions at Obelix for master's internships, PhD students, and post-docs; see the corresponding webpage. If you are interested in doing research with me and have an excellent track record but cannot find a suitable announcement, feel free to contact me directly or through the contact widget!