DeepComics: Saliency estimation for comics

Kévin Bannier¹, Eakta Jain², Olivier Le Meur¹

¹ Univ Rennes, CNRS, IRISA, France

² University of Florida, Gainesville, US

ACM Symposium on Eye Tracking Research and Applications (ETRA), 2018

Abstract

A key requirement for training deep learning saliency models is a large eye tracking dataset. Although eye tracking technology has become far more accessible, collecting eye tracking data on a large scale remains cumbersome for very specific content types such as comic images, which differ from natural images such as photographs because text and pictorial content are integrated. In this paper, we show that a deep network trained on visual categories where gaze deployment is similar to that of comics outperforms both existing models and models trained on visual categories where gaze deployment differs dramatically from that of comics. Further, we find that it is better to use a computationally generated dataset from a visual category close to comics than real eye tracking data from a visual category with a different gaze deployment. These findings have implications for transferring deep networks to different domains.

Supplementary materials

- Gaze data overlaid on sample comics images (top row). Colored circles represent the gaze data of the five observers. Saliency maps computed from the gaze data (bottom row).

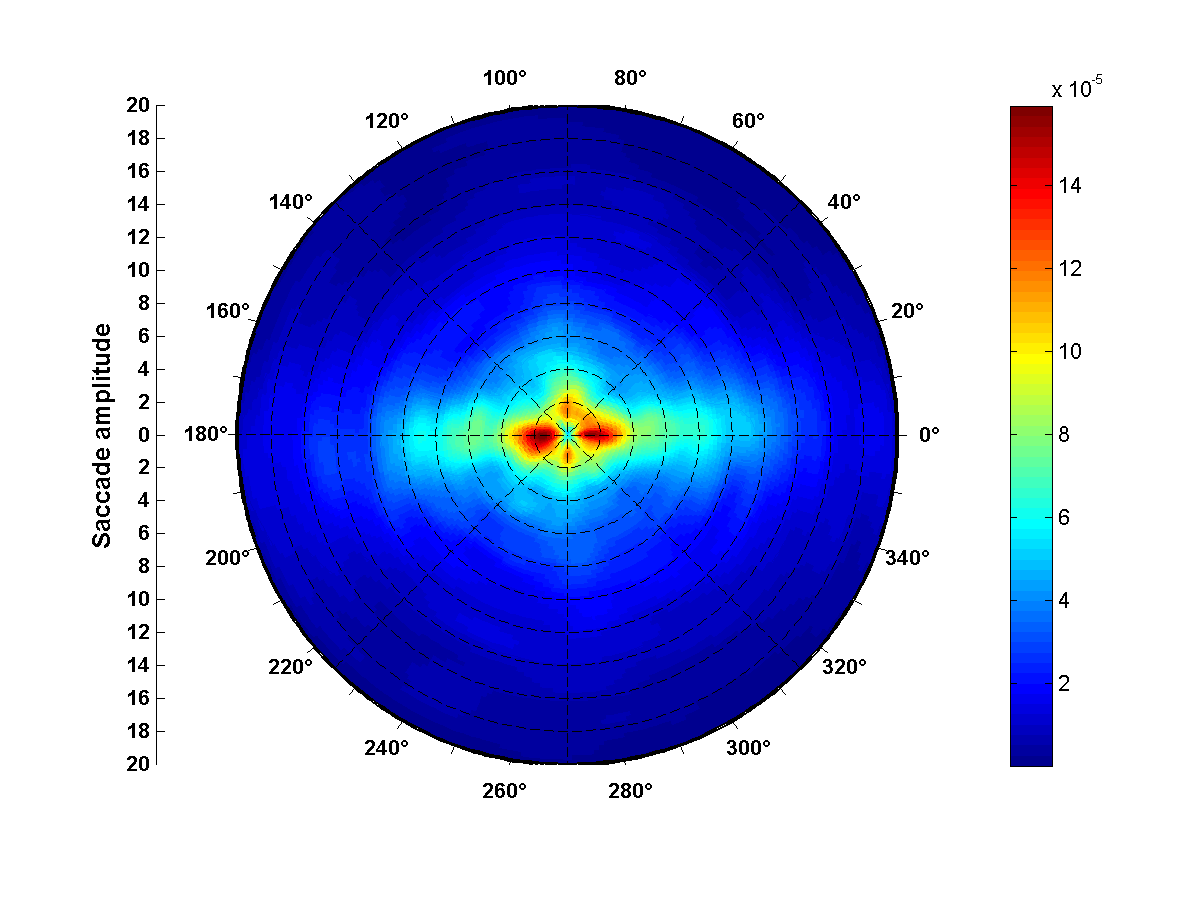

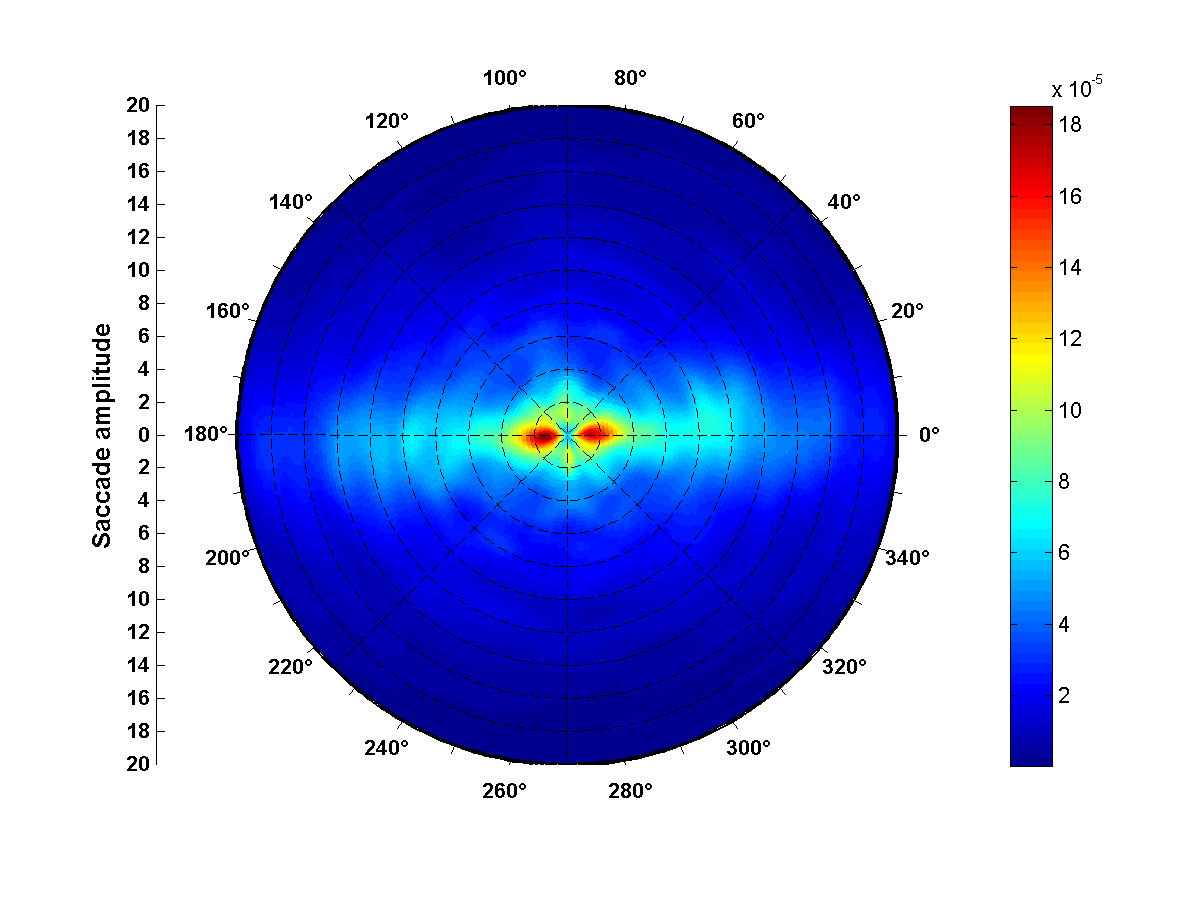

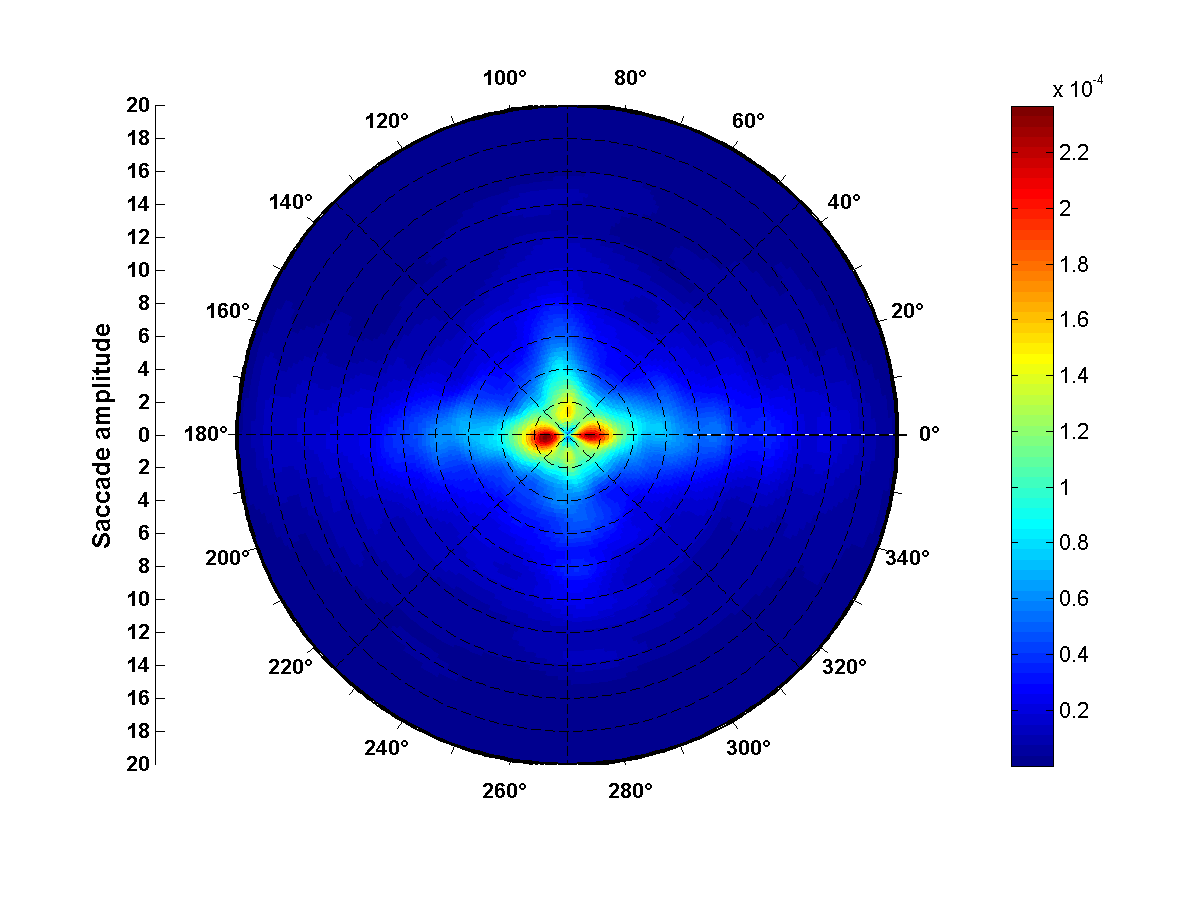

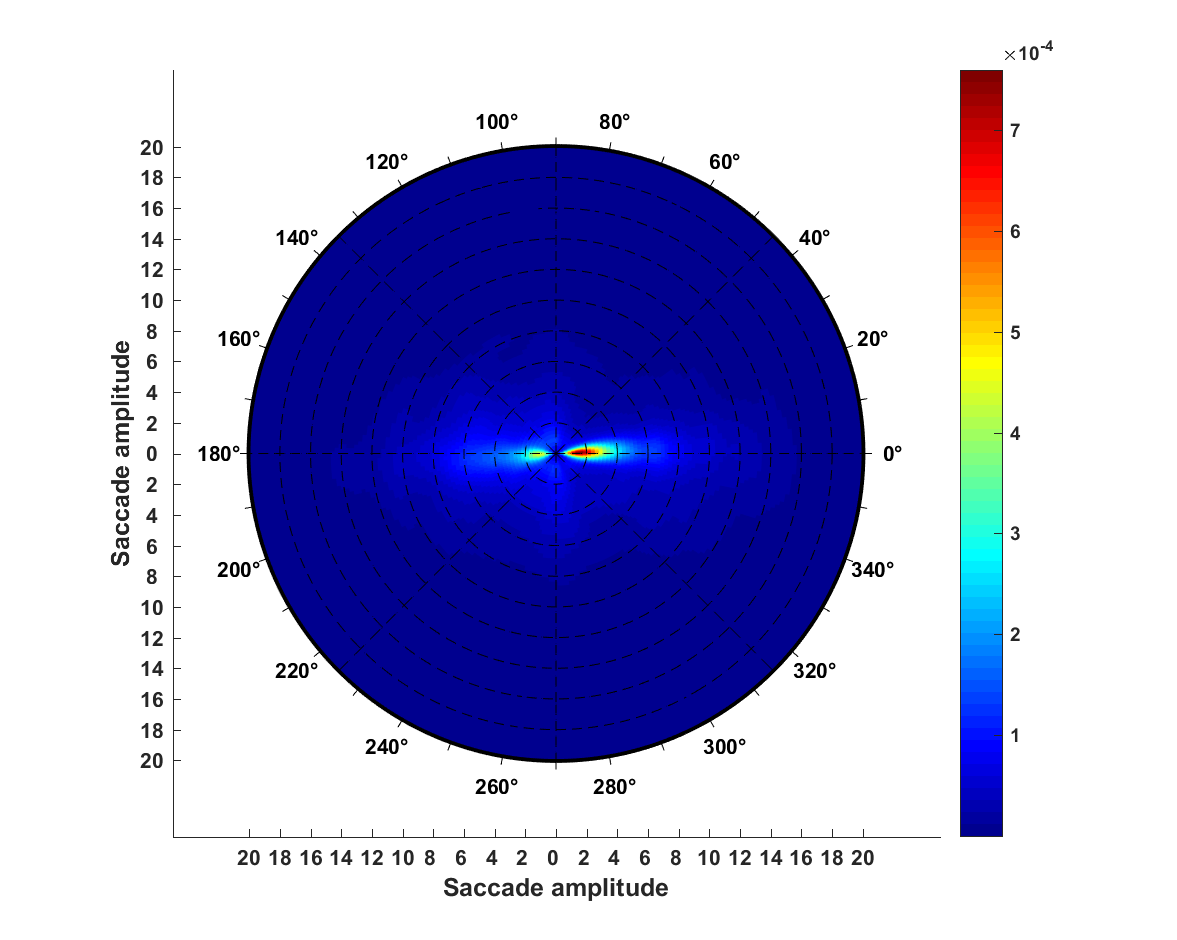
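The bottom-row maps are computed from the observers' gaze data. A common way to build such a map, and only a minimal sketch here, is to place a Gaussian on every fixation and normalize the sum. The function name `saliency_from_fixations`, the value of `sigma`, and the sample fixations below are illustrative assumptions, not values taken from the paper.

```python
import math


def saliency_from_fixations(fixations, width, height, sigma=2.0):
    """Sum an isotropic Gaussian centred on each fixation, then scale to [0, 1].

    fixations: iterable of (x, y) pixel coordinates pooled over all observers.
    sigma: Gaussian spread in pixels (assumed value, not from the paper).
    Returns a height x width list of lists (row-major).
    """
    two_sigma_sq = 2.0 * sigma * sigma
    smap = [[0.0] * width for _ in range(height)]
    for fx, fy in fixations:
        for y in range(height):
            for x in range(width):
                d2 = (x - fx) ** 2 + (y - fy) ** 2
                smap[y][x] += math.exp(-d2 / two_sigma_sq)
    # Normalise by the peak so the most fixated location has saliency 1.
    peak = max(max(row) for row in smap)
    if peak > 0:
        smap = [[v / peak for v in row] for row in smap]
    return smap


# Hypothetical fixations pooled over observers on a tiny 16x12 image.
fixations = [(4, 3), (5, 3), (4, 4), (12, 9)]
smap = saliency_from_fixations(fixations, width=16, height=12)
```

Regions where several observers looked, such as the cluster around (4, 3) in this toy example, end up with higher saliency than isolated fixations. In practice `sigma` is usually chosen to match roughly one degree of visual angle for the viewing setup.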

- Joint distribution of saccade amplitude and saccade orientation for the Art, Cartoon, Sketch and Webpages categories:

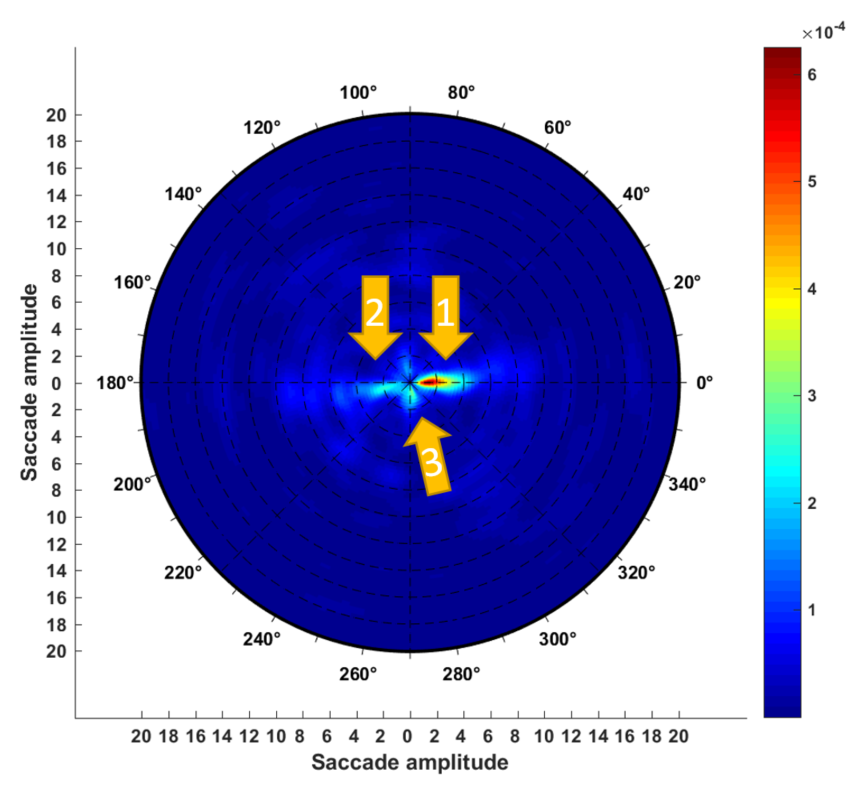

- Joint distribution of saccade amplitude and saccade orientation for the Comics category:

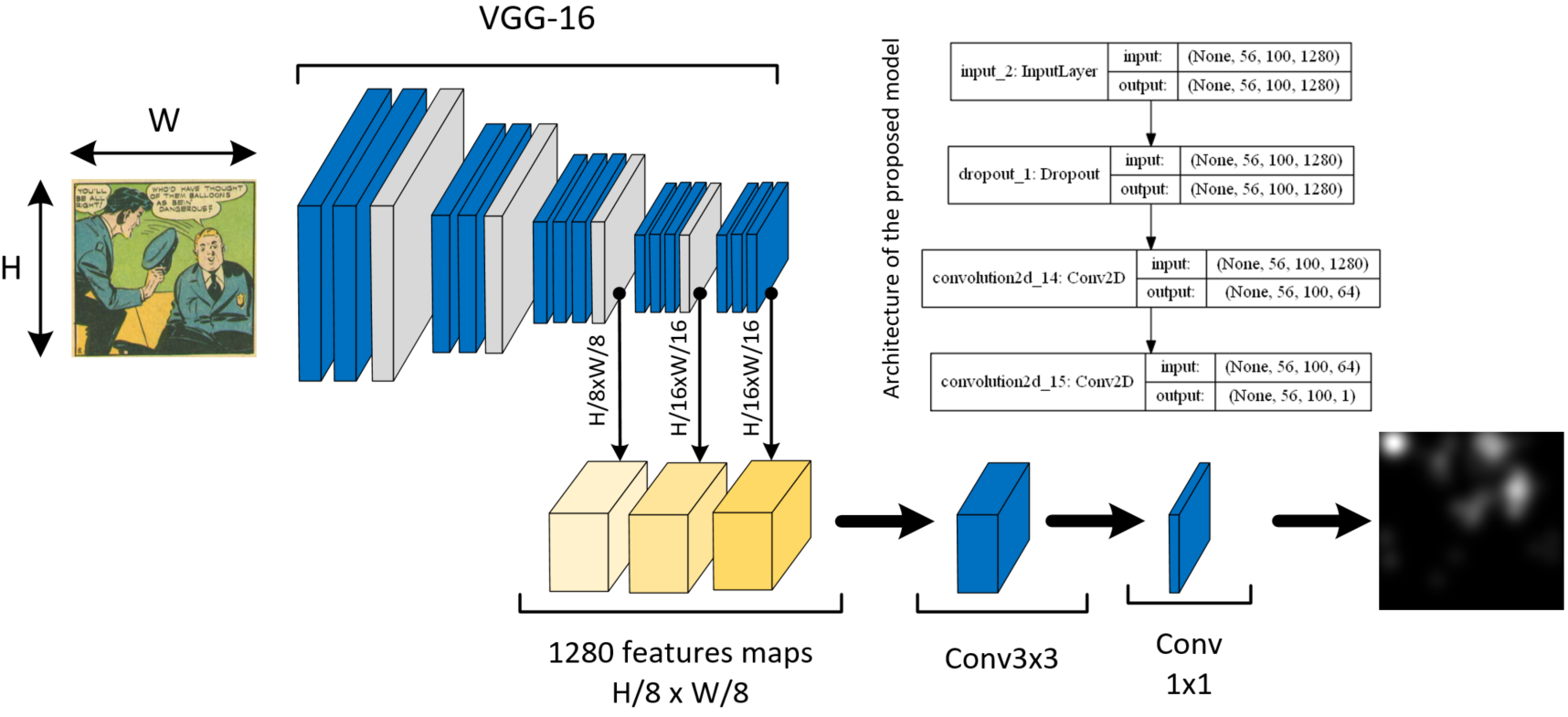
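For readers who want to reproduce this kind of plot on their own data, the sketch below shows one way a joint distribution of saccade amplitude and orientation could be estimated from a scanpath. The function name `saccade_histogram`, the bin counts, the pixel units, and `max_amp` are assumptions made for illustration; the paper's own binning and units may differ.

```python
import math


def saccade_histogram(scanpath, n_amp_bins=4, n_ori_bins=8, max_amp=400.0):
    """Joint histogram of saccade amplitude and orientation for one scanpath.

    scanpath: ordered list of (x, y) fixation positions in pixels.
    Returns hist[orientation_bin][amplitude_bin], normalised to sum to 1.
    Orientation bins are centred on 0, 45, 90, ... degrees (for 8 bins).
    """
    hist = [[0.0] * n_amp_bins for _ in range(n_ori_bins)]
    bin_width = 360.0 / n_ori_bins
    count = 0
    for (x0, y0), (x1, y1) in zip(scanpath, scanpath[1:]):
        dx, dy = x1 - x0, y1 - y0
        amp = math.hypot(dx, dy)
        if amp == 0:
            continue  # no displacement, so no orientation to record
        # Image y grows downward; negate dy so 0 deg is rightward, 90 deg upward.
        ori = math.degrees(math.atan2(-dy, dx)) % 360.0
        # Shift by half a bin so each bin is centred on its direction.
        o = min(int(((ori + bin_width / 2.0) % 360.0) / bin_width), n_ori_bins - 1)
        # Amplitudes at or above max_amp fall into the last bin.
        a = min(int(amp / max_amp * n_amp_bins), n_amp_bins - 1)
        hist[o][a] += 1
        count += 1
    if count:
        hist = [[v / count for v in row] for row in hist]
    return hist


# Hypothetical reading-like scanpath: rightward steps plus one return sweep.
scanpath = [(100, 100), (200, 100), (300, 100), (100, 200), (200, 200)]
hist = saccade_histogram(scanpath)
```

In this toy scanpath, three of the four saccades are short rightward steps and one is a longer down-left return sweep, so the mass concentrates in the rightward orientation bin. Pooling such histograms over all observers and images of a category gives a distribution that can be compared across categories.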

- Architecture of the model, based on the DeepGaze II and ML-NET networks (see references in the paper):

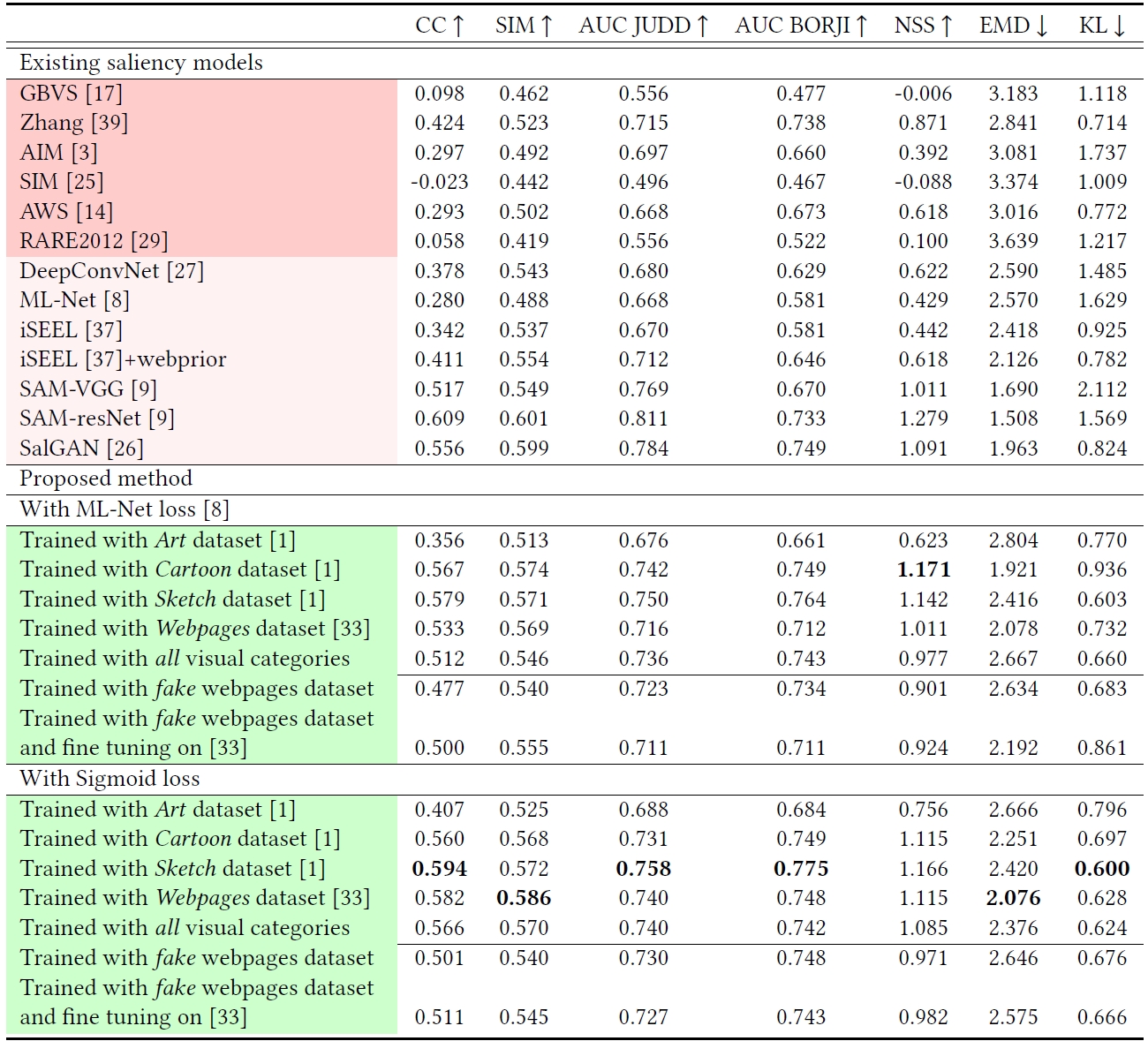

- Performance:

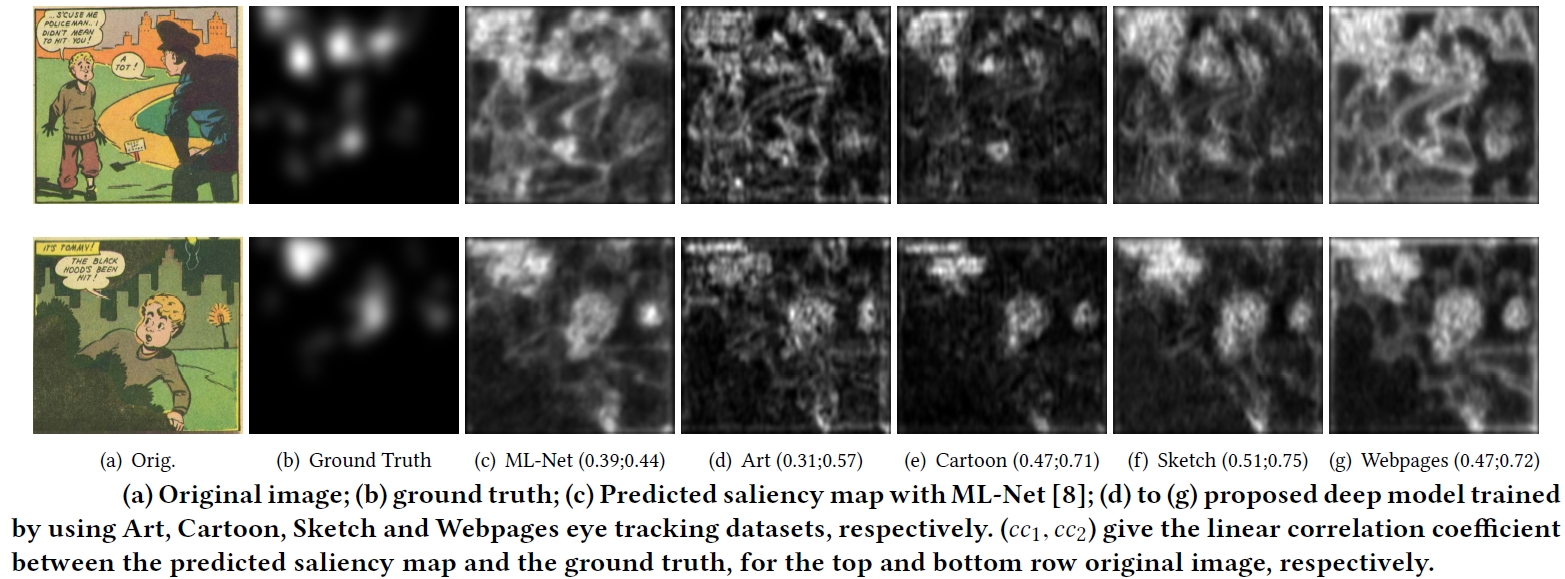

- Predicted saliency maps:

BibTeX

Bannier, Jain and Le Meur (2018). DeepComics: Saliency estimation for comics, ETRA, 2018.

@inproceedings{Bannier2018,
  title = {DeepComics: Saliency estimation for comics},
  author = {Bannier, Kévin and Jain, Eakta and Le Meur, Olivier},
  booktitle = {ACM Symposium on Eye Tracking Research and Applications},
  pages = {xx--xx},
  year = {2018}
}